Data Engineering

End-to-End Data Engineering Capabilities Across the Data Lifecycle (Snowflake, Informatica, Databricks)

From strategy to governance, we deliver complete Snowflake, Informatica, and Databricks services at enterprise scale.

Strategy & Roadmap

- Cloud data strategy and operating model definition

- Use-case prioritization across Analytics, ML, and GenAI

- Phased, domain-led migration and modernization roadmap

- Cost optimization and value realization planning

Foundation & Architecture

- Landing Zone, CI/CD Patterns

- Data Modelling (Medallion)

- Integration Patterns (Batch, CDC, Streaming)

Data Engineering & Modernization

- Large-scale ingestion from RDBMS, Mainframe, Files, APIs, and Cloud sources

- Batch, Streaming, and CDC ingestion patterns

- ELT pipelines, orchestration, and business rule implementation

- Snowflake integration patterns (Batch, CDC, Streaming)

- Performance tuning and optimization
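
To make the CDC ingestion pattern listed above concrete, here is a minimal sketch of how captured change events might be applied to a target table. It assumes an in-memory dictionary stands in for the target, and the `ChangeEvent` fields (`op`, `key`, `seq`, `row`) are hypothetical names chosen for illustration rather than the format of any particular CDC tool.

```python
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional


@dataclass(frozen=True)
class ChangeEvent:
    """One captured change. Field names are illustrative assumptions."""
    op: str                               # "I" (insert), "U" (update) or "D" (delete)
    key: str                              # primary key of the affected row
    seq: int                              # increasing position in the change log
    row: Optional[Dict[str, Any]] = None  # new row image; None for deletes


def apply_changes(target: Dict[str, Dict[str, Any]],
                  events: Iterable[ChangeEvent]) -> Dict[str, Dict[str, Any]]:
    """Apply change events to an in-memory 'table' keyed by primary key.

    Events are replayed in log order, so the latest change to a key wins.
    """
    for event in sorted(events, key=lambda e: e.seq):
        if event.op in ("I", "U"):
            if event.row is None:
                raise ValueError(f"event {event.seq} has no row image")
            target[event.key] = dict(event.row)   # upsert the new row image
        elif event.op == "D":
            target.pop(event.key, None)           # deleting a missing key is a no-op
        else:
            raise ValueError(f"unknown operation {event.op!r}")
    return target


# Made-up sample data: events arrive out of order and are replayed by seq.
table = {"c1": {"name": "Ada", "tier": "gold"}}
events = [
    ChangeEvent("U", "c1", 2, {"name": "Ada", "tier": "platinum"}),
    ChangeEvent("I", "c2", 1, {"name": "Grace", "tier": "silver"}),
    ChangeEvent("D", "c2", 3),
]
result = apply_changes(table, events)
```

In a warehouse, the same upsert-and-delete logic would typically be expressed as a merge statement over a staged batch of changes; the dictionary version only shows the ordering and idempotency concerns.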

Analytics & Data Products

- Curated datasets, Semantic Layer

- Data Marts, KPI Dashboards, Self-Service Analytics

- Data Sharing, Secure Collaboration

Data Quality & Observability

- Profiling, Reusable DQ Rules (DMFs)

- Data Ownership & Accountability

- SLA Tracking, Stewardship Review

- Cleansing, deduplication, validation, and anomaly detection

Governance & Compliance

- Catalogue, Lineage, Glossary, Tagging

- Data Security – RBAC, Masking

- Regulatory Controls (GDPR, HIPAA, BCBS 239)

Expertise in Snowflake

Data Architecture & Modelling

- Medallion / Data Layer Architecture

- Dimensional Modelling

- Curated data products for BI

- Auto-sizing and scaling of virtual warehouses

Data Ingestion & Orchestration

- Snowpipe Streaming, Streams, Tasks, Stages

- File storage integrations (S3/Blob/GCS)

- Advanced SQL, Dynamic Tables

- Stored Procedures (Python, JavaScript)

Performance & Cost Optimization

- Query profiling and tuning

- Warehouse optimization, Automatic Clustering

- Pruning strategies, Resource Monitor

- Warehouse policies & Usage analysis

Data Protection & Recovery

- Time travel and Data recovery

- Zero-copy cloning for fast & safe testing

- Data retention settings

Data Quality & Observability

- Data profiling, Exception management

- Reusable DMFs & DQ checks monitoring

- Metadata driven controls & Stewardship reporting

Governance, Security & Compliance

- RBAC & Role hierarchy

- Masking policies, tag-based policies

- Network policies, SSO/SAML/OAuth

- Auditing via ACCOUNT_USAGE

Snowflake Apps

- Native interactive apps with Streamlit

- dbt, Airflow Orchestration, CI/CD deployment

- BI Tools – live query for real-time updates

Snowflake AI & ML Models

- Cortex AI Services (LLM functions, Agents)

- Document AI Models (extraction / classification)

- Trust Center

Snowflake Data Sharing

- Outbound secure data sharing with RBAC and secure views

- Configure inbound sharing & integrate into curated datasets

- Publish governed datasets as listings

Expertise in Informatica

Data Architecture & Modelling

- Data warehouse & mart design (Bronze / Silver / Gold layers)

- Data modelling

- Batch & streaming ingestion patterns

- Scalable transformation design

ETL & Orchestration

- Informatica mappings, sessions, workflows

- Incremental processing & CDC patterns

- Pipeline observability & failure handling

- Integration with cloud storage (S3 / ADLS / GCS)
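
As an illustration of the incremental processing pattern listed above, the sketch below shows one common approach: a high-watermark filter on a last-modified timestamp. It is plain Python rather than an Informatica mapping, and the `updated_at` column name and `extract_increment` helper are assumptions made for the example.

```python
from datetime import datetime
from typing import Any, Dict, List, Tuple

Row = Dict[str, Any]


def extract_increment(rows: List[Row], watermark: datetime,
                      ts_column: str = "updated_at") -> Tuple[List[Row], datetime]:
    """Return the rows changed after the watermark, plus the new watermark.

    The caller persists the returned watermark and passes it to the next run,
    so each run picks up only rows modified since the previous one.
    """
    changed = [r for r in rows if r[ts_column] > watermark]
    # If nothing changed, keep the old watermark so no rows are skipped later.
    new_watermark = max((r[ts_column] for r in changed), default=watermark)
    return changed, new_watermark


# Made-up sample source rows.
source = [
    {"id": 1, "updated_at": datetime(2024, 1, 1)},
    {"id": 2, "updated_at": datetime(2024, 1, 3)},
    {"id": 3, "updated_at": datetime(2024, 1, 5)},
]

first_batch, wm = extract_increment(source, datetime(2024, 1, 2))
second_batch, wm_after = extract_increment(source, wm)  # nothing new on rerun
```

A watermark filter does not see hard deletes in the source, which is one reason log-based CDC is often used alongside it.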

Data Protection & Recovery

- Discovery and Classification

- Data Masking and Encryption

- Disaster Recovery Strategies for data restoration and business continuity

Machine Learning & AI Enablement

- Feature engineering & ML pipelines

- Model training, tracking & deployment (MLflow)

- Support for AI/ML and GenAI use cases

- End-to-end ML lifecycle enablement

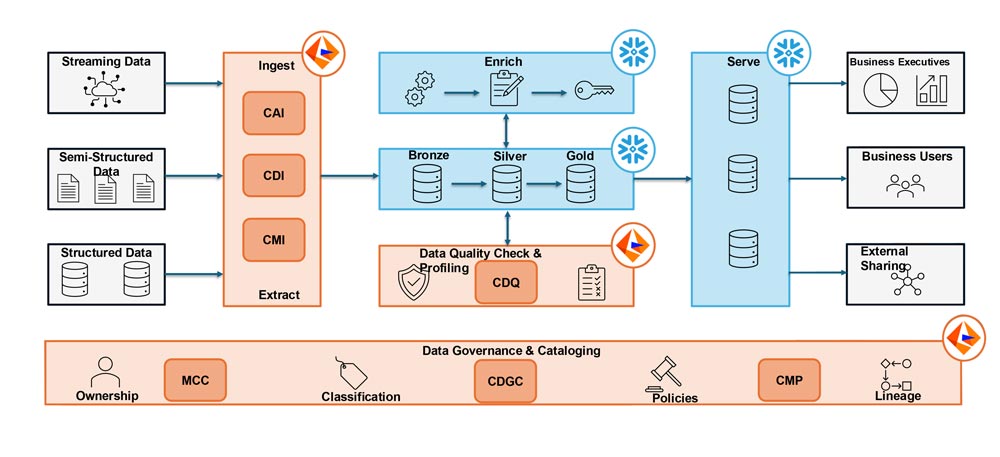

Data Ingestion & Reporting: (Leading US Based CDMO: CURIA)

PROBLEM STATEMENT

- Data onboarding did not scale across sources and teams

- Data quality was inconsistent and unmanaged

- End-to-end lineage was not provable

- Data consumption was siloed across departments

OUR SOLUTION

- Standardized batch, CDC, and event-driven ingestion using CAI, CDI, and CMI for scalable, restartable pipelines.

- Implemented Bronze–Silver–Gold layers to enforce traceability, governance, and analytics-ready data.

- Embedded profiling-driven quality checks with reusable rules and actionable exception handling.

- Enabled end-to-end lineage, ownership, and policy enforcement through CDGC/CMP.

- Delivered federated, domain-owned marts from curated layers to ensure a single version of truth.
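
The reusable quality rules and exception handling described above can be sketched roughly as follows. This is not the client implementation: the `Rule` class, the `not_null` and `in_range` helpers, and the sample columns are all hypothetical, chosen only to show how a small rule library can be applied to any dataset while failing rows are routed to an exception queue instead of being silently dropped.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple

Row = Dict[str, Any]


@dataclass(frozen=True)
class Rule:
    """A named, reusable check on one column (illustrative structure)."""
    name: str
    column: str
    check: Callable[[Any], bool]


def not_null(column: str) -> Rule:
    return Rule(f"{column}_not_null", column, lambda v: v is not None)


def in_range(column: str, low: float, high: float) -> Rule:
    return Rule(f"{column}_in_range", column,
                lambda v: v is not None and low <= v <= high)


def run_rules(rows: List[Row], rules: List[Rule]) -> Tuple[List[Row], List[Dict[str, Any]]]:
    """Split rows into those passing every rule and an exception list.

    Each exception records the row and the names of the rules it failed,
    so a steward can act on it.
    """
    passed, exceptions = [], []
    for row in rows:
        failed = [r.name for r in rules if not r.check(row.get(r.column))]
        if failed:
            exceptions.append({"row": row, "failed_rules": failed})
        else:
            passed.append(row)
    return passed, exceptions


# Made-up sample rows and rules.
rows = [
    {"batch_id": "B1", "yield_pct": 92.5},
    {"batch_id": None, "yield_pct": 88.0},
    {"batch_id": "B3", "yield_pct": 140.0},
]
rules = [not_null("batch_id"), in_range("yield_pct", 0, 100)]
clean, exceptions = run_rules(rows, rules)
```

Keeping rules as data (name, column, check) is what makes them reusable across datasets: a new source needs only a new rule list, not new pipeline code.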

KEY BENEFIT

As a result, the client reduced data onboarding from weeks to days, improved trust through enforceable quality controls, increased performance by shifting transformations to Snowflake, achieved audit-ready traceability with end-to-end lineage, and aligned all departments on consistent KPIs from shared curated datasets.

From Fragmented Data to a Trusted, Analytics-Ready Lakehouse

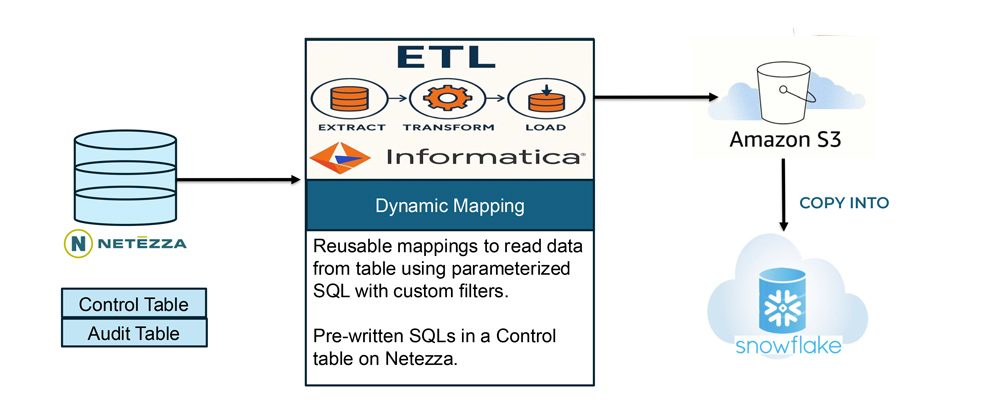

Data Migration: (Leading US Bank: USAA)

PROBLEM STATEMENT

- Large-scale migration risk: ~100 TB of critical banking data needed to move to Snowflake in less than 6 months.

- Zero-tolerance for errors: business metrics and reporting had to remain identical during cutover.

- Operational complexity: hundreds of tables made manual or bespoke migrations unviable.

- Execution risk: required a repeatable, low-risk approach to avoid delays and rework.

OUR SOLUTION

- Delivered a ~100 TB Netezza-to-Snowflake lift-and-shift migration, maintaining full functional equivalence during cutover.

- Managed scale and risk through parallel loading, incremental deltas, and rigorous reconciliation controls.

- Implemented a metadata-driven dynamic ingestion framework, enabling hundreds of tables to migrate using a small set of reusable mappings.

- Established a future-ready foundation, positioning the platform for Snowflake-native ELT optimization and long-term maintainability.
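
A rough sketch of the two ideas above, metadata-driven ingestion and reconciliation, is shown below. It is an illustration under stated assumptions, not the framework delivered for the client: `TableSpec`, `build_extract_query`, `fingerprint`, and the table names are hypothetical, and the checksum is a simple order-independent hash that ignores data-type differences between platforms.

```python
import hashlib
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class TableSpec:
    """One metadata row describing a table to migrate (hypothetical fields)."""
    source_table: str
    target_table: str
    delta_column: Optional[str] = None   # None means full load only


def build_extract_query(spec: TableSpec, since: Optional[str] = None) -> str:
    """Generate the extract query from metadata instead of hand-writing it.

    Values are interpolated directly, so the metadata must come from a
    trusted, controlled source.
    """
    query = f"SELECT * FROM {spec.source_table}"
    if spec.delta_column and since is not None:
        query += f" WHERE {spec.delta_column} > '{since}'"
    return query


def fingerprint(rows: Iterable[tuple]) -> Tuple[int, str]:
    """Return (row count, checksum); the checksum ignores row order."""
    count, total = 0, 0
    for row in rows:
        digest = hashlib.sha256(repr(row).encode("utf-8")).digest()
        total = (total + int.from_bytes(digest, "big")) % (1 << 256)
        count += 1
    return count, f"{total:064x}"


def reconcile(source_rows: Iterable[tuple], target_rows: Iterable[tuple]) -> bool:
    """True when both sides have the same row count and checksum."""
    return fingerprint(source_rows) == fingerprint(target_rows)


# Made-up metadata: one full-load table and one delta-enabled table.
specs = [
    TableSpec("NZ.ACCOUNTS", "SF.ACCOUNTS"),
    TableSpec("NZ.TXNS", "SF.TXNS", delta_column="POSTED_AT"),
]
queries = [build_extract_query(s, since="2024-01-01") for s in specs]

matches = reconcile([(1, "a"), (2, "b")], [(2, "b"), (1, "a")])
mismatch = reconcile([(1, "a"), (2, "b")], [(1, "a"), (2, "x")])
```

Because each table is just a metadata row, adding a table to the migration means adding a `TableSpec`, not a new mapping, and the same reconciliation check runs after every load.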

KEY BENEFIT

As a result, the client achieved a low-risk, on-schedule migration, significantly reduced engineering effort, faster execution during cutover, and a Snowflake foundation ready for scalable optimization and future growth.

Netezza to Snowflake Migration using Informatica

Lift-and-Shift Migration (close to 100 TB of data)

Why OpTech?

Enterprise IT Solutioning Experience

- We have more than 550 combined years of experience delivering complex data engineering solutions

- Strong foundation in enterprise architectures, cloud, and data platforms

- Proven ability to translate business needs into scalable technical solutions

Certified Snowflake, Informatica and Databricks Professionals

- We have a team of 19 certified and experienced Snowflake and Informatica engineers

- Hands-on expertise across strategy, migration, optimization, and operations

- Deep understanding of analytics, performance, and cost governance

Skilled & Motivated Delivery Teams

- Our team of around 45 carefully selected, highly skilled data engineers helps us deliver the most critical projects in record time.

- Strong ownership mindset and delivery accountability

- Continuous learning culture across Snowflake, Databricks, and cloud platforms